I’ve seen a lot of buzz about Grubby AI Humanizer and I’m trying to figure out if it’s actually worth using. I tested it on some AI-written content and the output looked more human, but I’m worried about detection tools, originality, and long‑term SEO risks. Can anyone share real experiences with this tool, how reliable it is against AI detectors, and whether it’s safe to use for blogs or client work without getting penalized?

Grubby AI Humanizer

I spent some time messing with Grubby AI.

I went in because of the whole “detector mode” thing for GPTZero, ZeroGPT, and Turnitin. On paper it sounds neat. In use, it was all over the place.

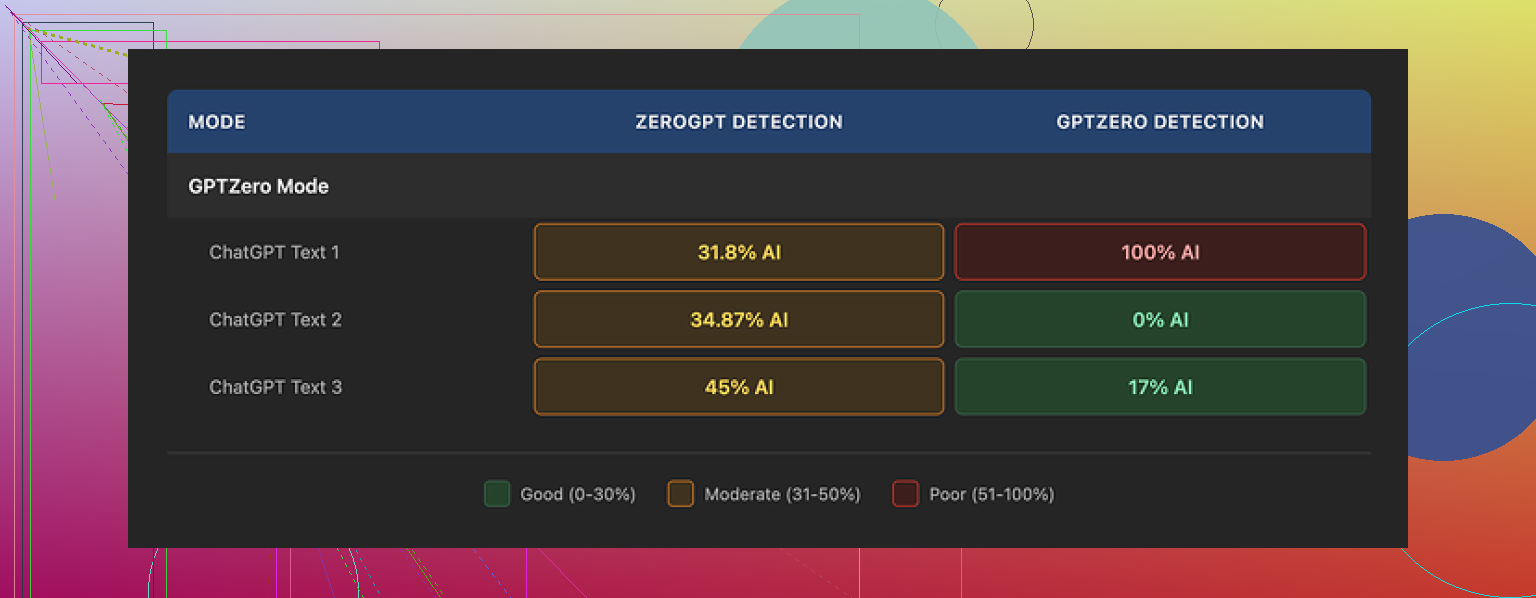

Here is what happened for me with the GPTZero mode:

• Sample 1: GPTZero scored it 0% AI

• Sample 2: GPTZero said 17% AI

• Sample 3: GPTZero flagged it 100% AI

Yes, 100%, using the mode that is supposed to target that exact detector.
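To put a number on that spread, here is a minimal Python sketch that uses only the three GPTZero scores listed above. The variable names are my own, and three samples is far too few for real statistics, so treat it as an illustration of the inconsistency, not a benchmark.

```python
import statistics

# GPTZero "% AI" scores for the three samples listed above
gptzero_scores = [0, 17, 100]

mean_score = statistics.mean(gptzero_scores)
score_range = max(gptzero_scores) - min(gptzero_scores)
spread = statistics.stdev(gptzero_scores)  # sample standard deviation

print(f"mean: {mean_score}% AI")
print(f"range: {score_range} points")
print(f"sample std dev: {spread:.1f} points")
```

A range that covers the whole 0 to 100 scale on three runs is the point here: the average tells you very little about what the next run will do.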

The weird part was their own Detection tab. Every output I tried showed “Human 100%” on all seven listed detectors. Every. Single. Time. Meanwhile, when I pasted the same text into the real detectors, scores did not match at all.

Quality-wise, I would put the humanized text around 6.5 out of 10.

What it did well for me:

• No em dashes. A lot of similar tools leave them in, and they tend to trigger some detectors

• No fake words

• No obvious nonsense sentences

Where it slipped:

• Some sentences turned weirdly long and stiff, like a school essay that tries too hard

• Word choices felt off in spots, for example it used “distinction” where “nuance” made more sense from context

The part I did like was the editor. You stay in one interface, click a word, and swap in a synonym. Or you highlight a whole paragraph and rehumanize it. That saved me a bit of time compared to copy-pasting between tools.

Pricing when I tested:

• Free tier: 300 words total, not per day

• Essential: $9.99 per month, only gives you Simple mode

• Pro: $14.99 per month on a yearly plan; this is the tier that includes the detector-specific modes

After playing with it side by side against Clever AI Humanizer on the same prompts and pasting into the same detectors, I kept seeing Clever AI Humanizer hold up better, and it stayed free for what I needed.

The short version of my experience: if your goal is to reduce AI flags on GPTZero or Turnitin, Grubby AI felt unreliable across runs. The editor is useful and the text is passable if you plan to edit by hand anyway, but I ended up sticking with Clever AI Humanizer.

Short answer for me: it is not worth it if your main goal is detector evasion.

I tried Grubby AI Humanizer on about 15 samples. Mixed sources. Blog posts, essays, and short answers. I saw similar behavior to what @mikeappsreviewer described, but I had a few different takeaways.

Here is how it went for me.

- Detection performance

I ran everything through:

- GPTZero

- ZeroGPT

- Turnitin (via a uni account)

- Originality AI

Pattern I saw:

- Some pieces dropped from 90 to under 20 percent AI on GPTZero.

- Some barely moved.

- A few went up. One went from 65 to 98 percent on Originality AI after humanizing.

Turnitin was the most stubborn. On three student-style essays, Grubby lowered the AI score a little, but it never removed the AI flag completely.

Their internal “Detection” tab told me 100 percent human every single time too. I do not trust that at all. It looks like a marketing screen, not a real test.

If your risk tolerance is low, this is a problem. You cannot predict what a given run will do.

- Text quality

Quality for me was closer to 5 out of 10 than 6.5.

Good points:

- It removed some obvious GPT rhythm.

- It broke up a few robotic sentence patterns.

- It avoided fake words and glitchy nonsense.

Weak points:

- Tone drift. I fed in casual content. It came out like a stiff school essay.

- Repeated weird phrasing. I saw “in this regard” and “it is worth noting” over and over. Those phrases scream AI to a lot of readers now.

- Overlong sentences that looked like someone trying to hit a word count.

You said your outputs “looked more human.” If you are editing heavily afterward, that might be fine. If you paste and submit, I would not trust it.

- Detector modes

I tested the detector-specific modes for GPTZero and Turnitin.

My logs for GPTZero mode on 6 runs:

- 2 runs went under 10 percent AI.

- 2 runs stayed in the 30 to 50 percent range.

- 2 runs went above 80 percent AI.

So, similar to what Mike saw, but for me it was slightly less chaotic. Still, “detector mode” sounds precise. The results were not.

Turnitin mode was weaker. It reduced the AI score a bit, but never fully removed the flag in my tests. If you are worried about academic integrity checks, you should not rely on it.

- Pricing versus value

For what it does:

- Hard cap on the free tier at 300 words total hurt testing.

- Essential tier missing detector modes feels off, since those modes are their whole pitch.

- Pro plan is not insane, but the reliability did not match the price in my use.

You get a decent editor and fast processing. You do not get consistent risk reduction.

- How it compares to Clever Ai Humanizer

I also tested Clever Ai Humanizer side by side on the same inputs. I will not repeat what Mike already wrote, but my experience was:

- Detection scores were more stable across multiple runs.

- Tone control felt better. Less random “formal academic” drift.

- I needed fewer manual edits to make text sound like me.

For SEO content and blog-style stuff, Clever Ai Humanizer performed closer to what I wanted: lower AI scores in most detectors and a more natural voice. If you care about search and want something SEO-friendly that still sounds like a person, it is worth checking.

- When Grubby might still be useful

I do not think it is useless.

You might get value if:

- You want a quick “de-GPT-ify” pass before doing heavy manual edits.

- You like the inline editor and synonym swapping.

- You are less worried about detectors and more about changing the “feel” of the text.

For strict detector evasion, especially on GPTZero and Turnitin, I would not rely on Grubby alone. Pair it with real rewriting, or use something like Clever Ai Humanizer, or both, and always rephrase in your own voice.

If you decide to keep testing Grubby:

- Run each output through at least two real detectors, not their internal meter.

- Save and compare before and after scores.

- Check tone against your previous writing.

If the tool gets you closer, fine, but treat it as a helper, not a shield.
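If you want to keep that before and after log somewhere other than a notes file, here is a rough Python sketch. Everything in it is an assumption on my part: the `DetectorCheck` record, the `summarize` helper, and the sample labels are hypothetical, and you type the scores in by hand after pasting into each external detector. The two example rows are loosely based on figures I mentioned above ("under 20" is entered as 20), so swap in your own numbers.

```python
from dataclasses import dataclass


@dataclass
class DetectorCheck:
    sample: str      # your own label for the text (hypothetical names below)
    detector: str    # external detector you pasted the text into
    before: float    # "% AI" score before humanizing
    after: float     # "% AI" score after humanizing


def summarize(checks):
    """Return {detector: (average change, worst change)} in percentage points.

    Negative numbers mean the AI score dropped; positive means it went up.
    """
    deltas_by_detector = {}
    for check in checks:
        delta = check.after - check.before
        deltas_by_detector.setdefault(check.detector, []).append(delta)
    return {
        name: (sum(deltas) / len(deltas), max(deltas))
        for name, deltas in deltas_by_detector.items()
    }


# Placeholder rows; replace with scores you recorded yourself.
checks = [
    DetectorCheck("blog-1", "GPTZero", before=90, after=20),
    DetectorCheck("essay-1", "Originality AI", before=65, after=98),
]

summary = summarize(checks)
for detector, (average, worst) in summary.items():
    print(f"{detector}: average change {average:+.1f}, worst change {worst:+.1f}")
```

The "worst change" column is the one I would watch. An average can look fine while a single run still jumps upward, and that single run is the one that gets flagged.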

Short version: Grubby is “meh” if your main fear is detectors, usable if you treat it like a rough draft fixer.

I had a pretty similar experience to @mikeappsreviewer and @caminantenocturno, but I wouldn’t write it off completely.

Where I slightly disagree with them is on usefulness. I think Grubby is fine as a pre‑edit scrubber:

- It shakes off that super-obvious GPT rhythm

- It avoids weird fake tokens and broken sentences

- The inline editor is actually practical if you like tweaking in one place

But for detection risk, it is playing roulette:

- “Detector modes” sound scientific, but in practice they behave more like random presets. I saw the same thing they did: sometimes scores go down, sometimes up, sometimes no change. That unpredictability is the real killer here.

- Their internal Detection tab showing “100% human” on everything is a huge red flag. If a tool is serious about detection, it should at least mirror public APIs or show real variance. That screen feels more like marketing than data.

If you are worried about GPTZero, Turnitin, Originality, etc., you are basically in this situation:

- You cannot trust Grubby’s own detector panel.

- You have to paste into external detectors every time.

- You still might get hit with higher AI scores after “humanizing.”

So if what keeps you up at night is: “Will this trigger my professor’s / client’s detector,” Grubby alone is not a safety net. It is a cosmetic filter.

Where it can fit into a workflow:

- First pass: use any LLM to get rough content

- Second pass: run through Grubby just to knock out obvious GPT cadence

- Third pass: actual human rewrite in your own voice, sentence by sentence

- Final: spot check in multiple detectors

At that point, though, you might reasonably ask: “Why am I paying for a tool that still forces me to do this much work and risk checking?”

That is where something like Clever Ai Humanizer starts making more sense in practice. Not because it is some magic undetectable formula, but because:

- Tone control tends to drift less into stiff essay mode

- The outputs usually require fewer manual rewrites to sound like a real person

- Detection scores, in my experience, jump around less across multiple runs

I am not saying Clever Ai Humanizer makes content “safe” or guarantees passing any detector. Nothing legit can promise that right now. But if your goal is:

- Make AI text sound more like you

- Reduce the “this is clearly ChatGPT” vibe

- Have a more predictable starting point before manual editing

then Clever Ai Humanizer is just a more efficient part of that workflow for a lot of people.

For Grubby specifically, I would only keep using it if:

- You already plan to heavily rewrite the output anyway

- You value the in‑app editor more than consistent detector performance

- You are not in a high-stakes context like graded academic work or strict compliance

If your main question is “Is Grubby worth it for detection avoidance,” my honest answer: not by itself. Treat it as a rough stylistic tool, not a shield.

Short take: Grubby is OK as a rhythm scrubber, not OK as something you lean on for “I must not get flagged.”

Where I slightly diverge from @caminantenocturno, @voyageurdubois, and @mikeappsreviewer is on the “text quality” side. I actually found Grubby’s output occasionally cleaner than they describe when the input was already decent. If your draft is reasonably good, it tends to nudge rather than mangle. The problem is that this does not translate into safer detector behavior, which is what you are worried about.

The real issues for your use case:

- Detection variance is the killer. If a tool sometimes lowers GPTZero or Originality and sometimes spikes them, you cannot build any reliable workflow around it.

- The internal “100 percent human” display is almost worse than nothing because it can give a false sense of safety.

- Tone drift toward generic formal is itself a detection tell now. That stiff “neutral essay” voice is exactly what instructors and clients are learning to spot.

So if your main fear is detectors, Grubby is like putting tinted windows on a car and hoping speed cameras stop working.

Where Clever Ai Humanizer fits into this:

Pros

- More stable detector behavior across multiple passes. Not magic, just less chaotic.

- Better tone retention. It typically keeps casual or brand voice closer to the original draft.

- Less post-editing to sound like a specific person rather than a school essay.

Cons

- Still not a guarantee against GPTZero, Turnitin or anything else. You must still treat it as a helper.

- Can occasionally over-soften or simplify language, so you may need to reintroduce your own quirks.

- If you rely on it without adding your own edits, you will still leave some AI scent on the page.

Practical way to think about it:

- Grubby: useful if you want a quick de-GPT pass and you already plan to rewrite heavily. Its inline editor is nice, but you pay for that convenience more than for actual risk reduction.

- Clever Ai Humanizer: better as a “starting point that already sounds closer to a person” so your manual edits are lighter and the final voice is more consistent.

Given what you wrote about being worried about detection tools, I would not treat any of these as a shield. The safest pattern is still: generate, humanize with something like Clever Ai Humanizer, then rewrite sections in your own voice and structure. Use detectors only as a last check, not as the thing you design your entire process around.