I recently tried the Monica AI Humanizer to make my AI-generated content sound more natural, but I’m not sure if it’s actually improving readability or just changing words around. I’m worried about SEO impact, detection tools, and whether this tool really helps with human-like writing for blogs and reviews. Can anyone share their experience, tips, or red flags I should watch for when using Monica AI Humanizer for content writing and SEO-focused articles?

Monica AI Humanizer Review, from someone who tried way too many of these

Monica AI link: Monica AI Humanizer Review with AI-Detection Proof

I spent an afternoon playing with Monica’s humanizer, feeding it the same source text I used on a bunch of other tools. Here is how it behaved for me.

First thing that hit me: you get one button, and that is it.

No sliders.

No tone choices.

No “level of humanization”.

No modes.

You paste text, press the button, pray a little, and whatever comes out is what you are stuck with.

That would be fine if the output held up under detectors. For me, it did not.

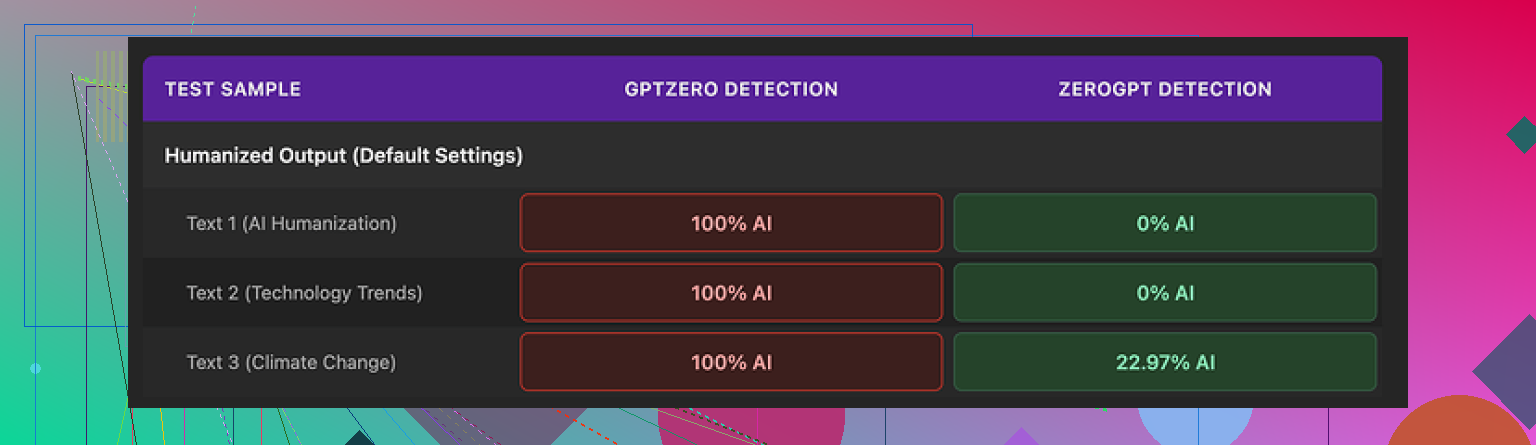

I ran three samples through two detectors:

• GPTZero: flagged every single Monica output as 100% AI. No variation at all.

• ZeroGPT: two samples landed at 0%, one at around 23%. Better, but still messy.

The GPTZero results alone killed my trust in it for anything serious, because you often do not know which detector your text will hit on the other side. A tool that passes one and fails another that hard feels risky.

Quality-wise, I’d give the writing around 4 out of 10.

Some specifics from my tests:

• It injected random typos into clean text.

One output turned “But” into “Ubt”, which looks like keyboard mash, not human.

• It sometimes messed with punctuation in strange ways.

It added apostrophes in places where the original had none, but also kept awkward patterns that I would expect a humanizer to smooth out.

• One output had “[ABSTRACT” stuck at the very beginning, as if it half-remembered some academic template and then forgot to finish the thought.

• It kept em dashes from the original AI text and seemed to add more.

A lot of detectors look at that kind of stylistic pattern. A humanizer that leans into that feels like it is working against you.
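If you want to check your own outputs for the glitches listed above, here is a minimal Python sketch that compares the original text with the humanized one. It is heuristic only: the anagram check (which catches swaps like “But” to “Ubt”) can also flag legitimate words that happen to share letters with an original word, and `glitch_report` is a hypothetical helper name, not part of Monica or any other tool.

```python
import re


def glitch_report(original: str, humanized: str) -> dict:
    """Heuristic diff of original vs humanized text (hypothetical helper).

    Flags scrambled words (e.g. "Ubt" for "But"), em dash counts before and
    after, and bracketed all-caps leftovers such as "[ABSTRACT".
    """
    word_re = r"[A-Za-z']+"
    orig_words = {w.lower() for w in re.findall(word_re, original)}

    # Index original words by their sorted letters so an anagram can be traced back.
    by_letters: dict[str, str] = {}
    for w in sorted(orig_words):
        by_letters.setdefault("".join(sorted(w)), w)

    scrambled = []
    for w in re.findall(word_re, humanized):
        lw = w.lower()
        if lw in orig_words:
            continue  # word survived unchanged
        source = by_letters.get("".join(sorted(lw)))
        if source is not None:
            scrambled.append((w, source))  # same letters, different order

    return {
        "scrambled_words": scrambled,
        "em_dashes_before": original.count("\u2014"),
        "em_dashes_after": humanized.count("\u2014"),
        "bracket_leftovers": re.findall(r"\[[A-Z]{3,}", humanized),
    }
```

A rising em dash count or any non-empty `scrambled_words` list is a cue to read that output closely before using it.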

After a few runs I stopped trusting it for anything where detection matters.

On pricing, the Pro plan on annual billing comes in at around $8.30 per month.

To be fair to them, Monica is not sold as “a humanizer app” first. It is a big all-in-one AI platform with chat, image tools, video stuff, and other features. The humanizer feels like a side attachment they threw in, not the core product.

So here is where it landed for me:

• If you already pay for Monica for chatbots, images, or video tools, the humanizer is a free extra you can poke at when you are bored. No harm testing it inside that bundle.

• If your main goal is to pass AI detectors, this is a bad pick. The GPTZero 100% AI readings on all my Monica outputs were a dealbreaker.

When I ran the same source text through multiple tools, Clever AI Humanizer gave me better results both in writing quality and in detection behavior.

It also did not require payment, which made the comparison feel a bit brutal for Monica.

If your priority is detection bypass, I would not start with Monica’s humanizer. If you are already in their ecosystem, treat it as a toy, not a solution.

I had a similar reaction to Monica’s humanizer. It changes words, but the value for you depends on what you care about most.

Here is a cleaner version of what you wrote, tuned for SEO and readability:

“I tested the Monica AI Humanizer on several AI-generated articles to see if it improves readability and helps avoid AI detection tools. I focused on three things: how natural the content sounds to human readers, the impact on SEO and rankings, and how well the text performs against common AI detectors. I want to know whether Monica AI Humanizer produces content that is safe to publish, or whether it only swaps words and risks penalties or lower trust from search engines and editors.”

On your concerns:

- Readability

Monica tends to rephrase and shuffle sentences. It often keeps structure and rhythm from the original AI text. That means the style still feels synthetic.

If you read the output out loud and still hear “AI voice,” it will not help your brand tone much.

Quick test

• Paste one of your Monica outputs in a doc.

• Print it or reread it later, once you have mostly forgotten it came from Monica.

• Ask yourself if you would accept it from a freelance writer.

If the answer is no, the tool is not helping you.

- SEO impact

Search engines care about usefulness, clarity, and originality. They do not care which tool you used.

Risks you should look at:

• Weird typos or broken grammar. That hurts user signals like time on page and bounce rate.

• Repetitive sentence structure. That can feel low quality to readers, which indirectly hurts SEO.

• Over-processed text. If the humanizer keeps unnatural phrasing, it will not help rankings.

Run your Monica output through:

• A grammar checker. Fix all errors.

• A readability checker. Aim for clear, simple sentences.

If the tool adds mistakes or clunky structure, it hurts SEO instead of helping.
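Readability checkers vary, but a common score is Flesch Reading Ease: 206.835 - 1.015 × (words per sentence) - 84.6 × (syllables per word), where higher means easier to read. A rough Python sketch, assuming a naive vowel-group syllable counter (real checkers use dictionaries or better rules, so expect their numbers to differ somewhat):

```python
import re


def count_syllables(word: str) -> int:
    """Rough vowel-group syllable count; an approximation, not a dictionary lookup."""
    word = word.lower()
    count = len(re.findall(r"[aeiouy]+", word))
    # Drop a likely silent trailing "e" ("make"), but not in "-le" endings ("table").
    if word.endswith("e") and not word.endswith("le") and count > 1:
        count -= 1
    return max(count, 1)


def flesch_reading_ease(text: str) -> float:
    """Flesch Reading Ease with naive sentence and word splitting."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        raise ValueError("need at least one sentence and one word")
    syllables = sum(count_syllables(w) for w in words)
    return (206.835
            - 1.015 * (len(words) / len(sentences))
            - 84.6 * (syllables / len(words)))
```

Run it on the raw AI text and on the Monica output; if the score drops, the humanizer made the text harder to read, not easier.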

- AI detection

Here I partly disagree with @mikeappsreviewer. Many detectors give false positives, even on human text. I would not build a whole workflow around passing a single detector.

That said, if GPTZero and similar tools flag Monica content as “likely AI” every time, it shows the style is still very machine-like.

Treat detectors as a warning light, not a final verdict.

If 2 or 3 different tools all scream “AI,” and the text also feels robotic to you, then you have a problem.
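The “warning light” rule above fits in a tiny decision function. This is a sketch under my own assumptions: the 0.5 threshold, the two-detector minimum, and recording your own read as a yes/no flag are illustrative choices, not anything the detectors themselves publish.

```python
def detection_warning(scores: dict[str, float], feels_robotic: bool,
                      threshold: float = 0.5, min_agreeing: int = 2) -> bool:
    """True only when several detectors agree AND your own read agrees.

    scores maps detector name -> reported AI probability in [0, 1]
    (a "100% AI" reading is 1.0). Defaults are illustrative assumptions.
    """
    flagged = [name for name, p in scores.items() if p >= threshold]
    return feels_robotic and len(flagged) >= min_agreeing
```

A single detector at 100% with everything else clean does not trip it; two or more detectors plus your own “this reads like a robot” does.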

- What to do if you still want to use AI text

Here is a practical workflow that protects SEO more than any humanizer button.

• Use AI for a rough draft only.

• Rewrite intros and conclusions yourself.

• Add personal details, examples, data, or opinions from your experience.

• Remove filler and vague sentences.

• Run through a grammar tool.

• Read it out loud once and fix awkward lines.

This does more for SEO and “human feel” than random synonym swaps.

- About Monica vs other tools

Monica’s humanizer feels like a quick utility, not a focused product. If you already use Monica for chat or images, fine, test it on low-risk content.

If your main goal is safer looking AI text, you might want a tool built for that task.

Since you mentioned detection and SEO, you might want to try Clever AI Humanizer. It is focused on making AI text look more natural and on handling detectors in a more controlled way. You can check it here:

smarter AI content humanization tool

Use it as one step in your flow, not the whole solution. You still need manual edits for voice and value.

Short version

• Monica often rewrites, not improves.

• Check outputs for grammar, flow, and user value.

• Do not rely only on passing AI detectors.

• Combine any humanizer with your own editing if you care about SEO and long-term safety.

Monica’s “humanizer” is basically a single mystery button that does… something. The question is whether that “something” actually helps you with readers, rankings, or detectors.

@mikeappsreviewer already tore into the detection side, and @yozora covered workflow stuff, so I’ll hit slightly different angles.

1. Is it improving readability or just spinning words?

From what you described and what others saw:

- It mostly shuffles phrasing while keeping the same structure.

- Random typos like “Ubt” instead of “But” are a huge red flag. That is not “human,” that is “broken.”

- Weird punctuation patterns and academic leftovers like “[ABSTRACT” are another sign it is not really modeling how people write, just mutating text.

If you have to spend extra time fixing the output so it doesn’t look half‑glitched, you are not gaining anything. At that point you could have just edited the original AI text yourself.

2. On SEO impact

Here is where I slightly disagree with both of them: it is not just about “quality” in a vague sense. Search engines look at:

- Clarity and usefulness for the user

- How people behave on the page

- Consistency with the rest of your site’s tone

So problems with Monica’s humanizer can hurt you indirectly:

- Typos and odd phrasing make users bounce quicker.

- Overly “processed” text that reads stiff, even if it passes one detector somewhere, will not keep people engaged.

- If your other pages are written in a clear, human voice and this one page sounds like it got hit by a word blender, that inconsistency alone can look low effort.

AI vs human is not the key. Helpful vs noisy is. Monica’s glitches push you toward “noisy.”

3. AI detection worries

I’m in the middle on this:

- I agree with @yozora that detectors are unreliable and can flag real human text.

- I also agree with @mikeappsreviewer that if GPTZero keeps screaming 100% AI on everything Monica produces, it shows the style is not very “humanlike” statistically.

So: I would not obsess over one detector, but if several tools + your own gut all say “yep, this reads like a robot,” treat that as a signal that the humanizer is not doing its job.

4. A cleaner, search-friendly version of what you are trying to do

What you are basically asking (and what people will actually search) sounds more like this:

I tested the Monica AI Humanizer on multiple AI-generated articles to see if it truly makes content more natural and reader-friendly. My main concerns are whether it actually improves clarity, how it affects search rankings over time, and whether it reduces the chances of being flagged by common AI detection tools. I’m trying to figure out if it creates content that is safe to publish on real sites or if it just swaps words, introduces errors, and increases the risk of lower trust from search engines and editors.

That is the kind of framing that aligns with “is this safe to use on my blog or client site” instead of “can I trick a detector.”

5. Monica vs alternatives

You already noticed: one button, no control. For a “humanizer,” that is… not great. If you care about:

- Tone control

- Reliable grammar

- Less robotic structure

- More consistent detection behavior

you need a tool that is actually built around that use case, not bolted onto a general AI suite.

This is where Clever AI Humanizer is more relevant to what you want. It focuses on making AI content sound more natural and less machine-patterned, with better handling of structure and style. If you want something specifically oriented to readable, search-friendly content, it is worth running the same text through it and comparing side by side:

sharpen your AI content for readers and search engines

Key difference from Monica: you are not just rolling the dice on a single “surprise me” button.

6. Practical bottom line

- If Monica’s output needs heavy cleanup, stop here. You are wasting time.

- If you are publishing on real sites where your name or brand matters, do not rely on Monica as the main filter.

- Use a more focused humanizer like Clever AI Humanizer for the heavy lifting, then do a quick manual pass for tone and factual checks.

If you really want to test it, take one article and make three versions: original AI, Monica, and Clever. Publish them on low-risk URLs and watch user behavior in analytics for a month. That data will tell you more than any detector score.

Short version: Monica’s “humanizer” acts more like a light spinner than a fixer. If you care about long-term SEO and real readers, treat it as disposable, not foundational.

Where I slightly disagree with others: I think people are over‑indexing on AI detectors. For content that actually ranks, the bigger risk is that Monica quietly lowers your perceived expertise and brand quality because of:

- Inconsistent tone across your site

- Random glitches that make you look careless

- Zero control over style, which kills any strategy for topic clusters or brand voice

If you publish for money or clients, that hurts more than a detector score.

Instead of repeating the same workflow points others gave, here is a different way to think about it.

1. Think in “risk tiers” per page

Not every page deserves the same effort:

- Low risk: short affiliate blurbs, internal tools, throwaway tests. Monica is acceptable here if you always do a quick human skim for typos.

- Medium risk: niche blog posts, guest posts on smaller sites. I would skip Monica and use something more structured like Clever AI Humanizer plus a short manual pass.

- High risk: homepage, lead magnets, linkbait articles, client pillar posts. No humanizer alone is safe. Start with AI, then manually rewrite key parts.

This tiering matters more than obsessing over a single “humanize” button.

2. Structure is the real AI tell, not synonyms

What @yozora and @mikeappsreviewer saw lines up with what I usually see: Monica changes words but keeps:

- Paragraph length

- Sentence cadence

- List formatting and transitions

Detectors and editors both notice that pattern. A true “humanizer” should break structure, not just vocabulary. This is where a more focused tool like Clever AI Humanizer has a better shot because it is built to reshape flow, not only swap terms.
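To see whether a tool changed structure and not just vocabulary, compare sentence-length statistics before and after. A minimal Python sketch, assuming naive sentence splitting after `.`, `!` or `?` and paragraphs separated by blank lines; `cadence` is a hypothetical helper name:

```python
import re
import statistics


def cadence(text: str) -> dict:
    """Sentence-length stats for non-empty prose (hypothetical helper).

    Splits sentences naively after ., ! or ? and paragraphs on blank lines.
    A low stdev means uniformly sized sentences, a common machine-like rhythm.
    """
    paragraphs = [p for p in re.split(r"\n\s*\n", text.strip()) if p.strip()]
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    lengths = [len(s.split()) for s in sentences]  # words per sentence
    return {
        "paragraphs": len(paragraphs),
        "sentences": len(lengths),
        "mean_words": statistics.mean(lengths),
        "stdev_words": statistics.pstdev(lengths),
    }
```

If the paragraph count, mean, and spread barely move between the original and the humanized output, the cadence is unchanged even when most of the words differ.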

3. How I would actually benchmark tools like this

If you want a more objective check than “feels robotic” but do not want to live inside detectors:

- Take 3 similar articles.

- Version A: raw AI, light human edit.

- Version B: AI through Monica, then your quick cleanup.

- Version C: AI through Clever AI Humanizer, then your quick cleanup.

Then look at, over a few weeks:

- Scroll depth and time on page

- Clicks to other internal pages

- Manual skim for brand voice: which one would you proudly show a client or editor?

You will usually find that “clean but obviously AI” beats “glitched fake human,” even if both get flagged somewhere.
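Once the three versions have been live for a few weeks, the numeric part of the comparison is a simple group-by over your analytics export. A Python sketch, assuming one row per pageview with hypothetical field names (`version`, `scroll_depth` as a fraction, `time_on_page_s`, `internal_clicks`); rename them to match what your analytics tool actually exports. The brand-voice skim stays manual.

```python
from collections import defaultdict
from statistics import mean


def summarize(rows: list[dict]) -> dict[str, dict]:
    """Average engagement per article version (field names are hypothetical)."""
    grouped: dict[str, list[dict]] = defaultdict(list)
    for row in rows:
        grouped[row["version"]].append(row)

    summary = {}
    for version, group in sorted(grouped.items()):
        summary[version] = {
            "pageviews": len(group),
            "avg_scroll_depth": mean(r["scroll_depth"] for r in group),
            "avg_time_on_page_s": mean(r["time_on_page_s"] for r in group),
            # Share of pageviews with at least one click to another internal page.
            "internal_click_rate": sum(r["internal_clicks"] > 0 for r in group) / len(group),
        }
    return summary
```

With only a few weeks of traffic on low-risk URLs, treat small differences between versions as noise rather than a verdict.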

4. Pros and cons of Clever AI Humanizer in this context

Since everyone mentioned it but did not really break it down:

Pros

- Better control over tone and structure compared with a single mystery button.

- Outputs tend to read smoother, which helps user behavior and therefore SEO.

- More consistent at avoiding obvious AI tells like repetitive sentence openings.

- Can reduce your editing time when you already know what you want the article to do.

Cons

- Still needs human editing for brand voice and factual nuance. It will not give you a finished, publish-anywhere article.

- Overuse can still flatten your style if you rely on it for every sentence.

- Not a shield against all detectors. If you expect “perfect bypass,” you will be disappointed.

- Adds another step and tool to your workflow, which some people hate.

Compared with what @mike34 and @mikeappsreviewer are saying, I am less interested in whether a tool hits 0 percent on a given scanner and more interested in whether it makes my editing faster without trashing my tone. Clever AI Humanizer does better there than Monica’s one-button approach.

5. Practical decision rule

Use this simple filter:

- If a Monica output needs more than 20 to 30 percent of sentences touched to sound like you, drop it from your serious workflow.

- For anything that represents your brand or expert niche, start from AI, run through a more controllable refiner like Clever AI Humanizer, then do a human pass focused on hooks, transitions, and unique insight.

- Keep detectors as a spot check, not a KPI.

That way you are optimizing for what actually matters: readers staying, trusting you, and clicking something, instead of just surviving an automated gatekeeper.
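The 20 to 30 percent rule in the decision filter can be measured instead of eyeballed: keep the tool's raw output, make your edits in a copy, and compare sentence by sentence. A minimal Python sketch using the standard library's `difflib`; the naive sentence splitter, the helper names, and the 0.25 default limit (picked from inside the suggested band) are my assumptions:

```python
import difflib
import re


def split_sentences(text: str) -> list[str]:
    # Naive splitter: breaks after ., ! or ? followed by whitespace.
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s.strip()]


def fraction_touched(tool_output: str, your_edit: str) -> float:
    """Share of the tool's sentences you changed or deleted (hypothetical helper)."""
    before = split_sentences(tool_output)
    after = split_sentences(your_edit)
    if not before:
        return 0.0
    # Compare whole sentences as sequence items; identical sentences count as kept.
    matcher = difflib.SequenceMatcher(a=before, b=after, autojunk=False)
    kept = sum(block.size for block in matcher.get_matching_blocks())
    return 1 - kept / len(before)


def keep_in_workflow(tool_output: str, your_edit: str, limit: float = 0.25) -> bool:
    # limit is an assumption within the 20 to 30 percent band.
    return fraction_touched(tool_output, your_edit) <= limit
```

Any sentence you tweak, even by a single word, counts as touched, which matches the spirit of “needs fixing to sound like you.”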