I’m trying to find a truly free competitor to the popular undetectable AI humanizer tools so my AI-written content doesn’t get flagged by detectors. Most services I’ve tried either add watermarks, limit word counts, or require expensive subscriptions. Can anyone recommend reliable, safe options or workflows that help humanize AI text for blogs and client work without breaking the bank?

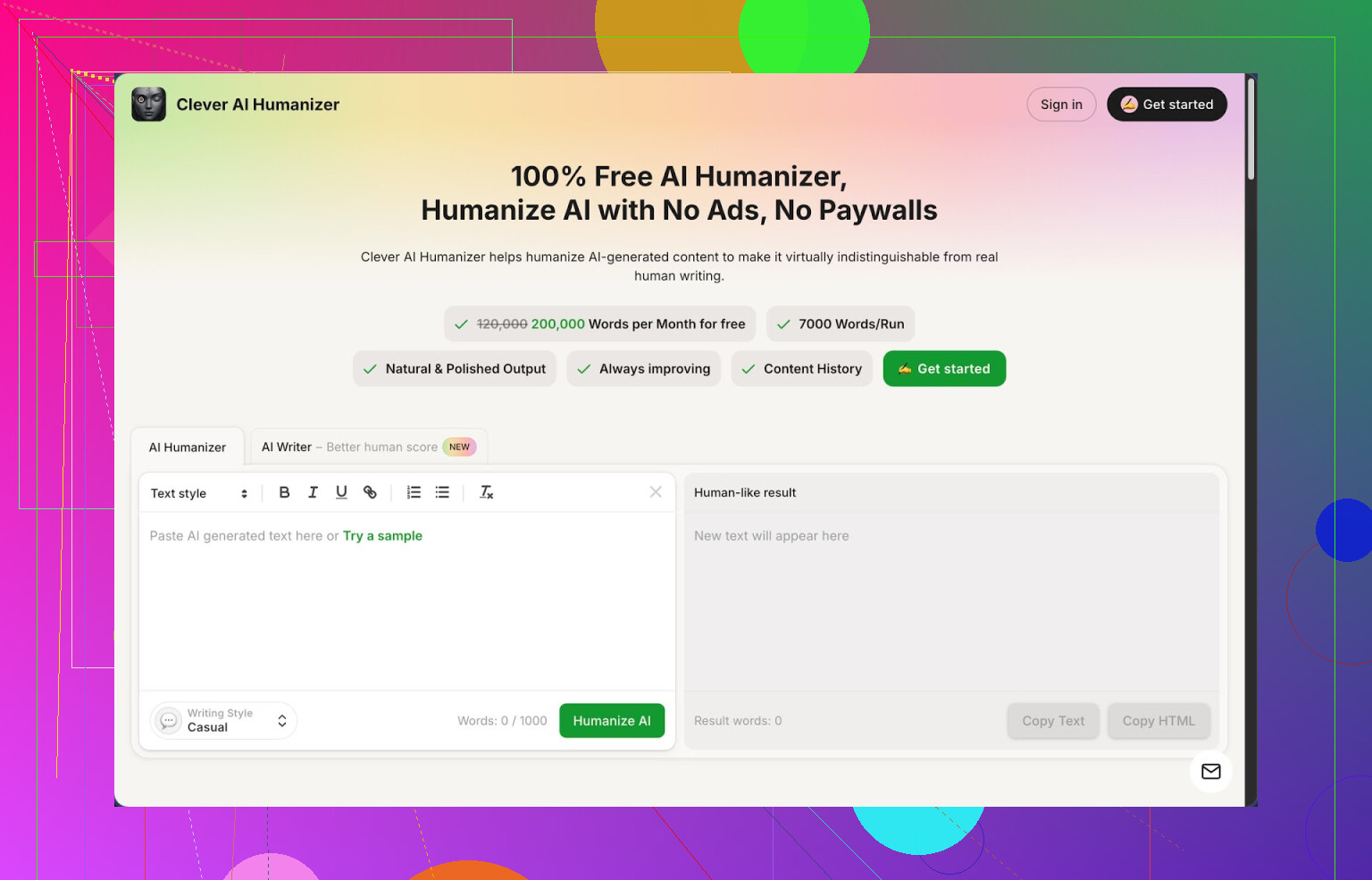

- Clever AI Humanizer review from someone who got paranoid about detectors

Link to the tool:

I have been bouncing between AI tools since late 2025, mostly for long blog posts and client docs. At some point the detector screenshots started to show up in email threads, and that pushed me into the whole “humanizer” rabbit hole.

Out of the stuff I tried, Clever AI Humanizer is the one I keep open in a pinned tab. Not because it is perfect, but because it hits a weird mix of free, simple, and not-annoying.

Here is what stood out.

Free plan and limits

I had to double-check this because it looked wrong at first:

- About 200,000 words each month

- Up to around 7,000 words in a single run

- No credit system

- No paywall in the middle of a rewrite

For context, I pushed three long-form samples through it, all in the “Casual” style, and ran the outputs through ZeroGPT. Every one of them showed 0 percent AI detected. That is not a promise it will always pass everything, but it was enough for me to start trusting it for anything that goes to picky clients.

If you write a lot of long content, the word limits matter more than the fancy stuff. Most other tools I tested forced me to slice articles into tiny chunks and then kept nagging me to pay.

Core humanizer workflow

The main thing is the “Free AI Humanizer” module.

My usual flow:

- Paste in AI text from wherever, often a draft from another model.

- Pick one of the three styles:

  - Casual

  - Simple Academic

  - Simple Formal

- Hit the button and wait a few seconds.

The output tends to:

- Break up repetitive phrasing

- Shift away from those stiff, generic structures that detectors lock onto

- Clean up flow so it reads more like something I would type under deadline

I compared chunks side by side with the original. The meaning stayed intact most of the time. There were no wild hallucinations or weird topic jumps in my tests.

For something like technical tutorials or structured blog posts, this matters. I do not want the tool “getting creative” with steps or numbers.

Extra modules I ended up using

I went in for the humanizer but ended up using the other parts when I was already there.

- Free AI Writer

This one generates essays, blog posts, or general articles from scratch. The reason it is useful is the combo:

- Generate draft with AI Writer

- Run it through the Humanizer in the same place

When I did this, the detection scores were often even lower than when I pasted text from random external models. It feels tuned to its own output.

I used it for:

- First drafts of niche blog posts

- Simple explainer texts for internal docs

- Rough outlines that I later rewrote by hand

- Free Grammar Checker

This is the boring tool that ends up saving time.

It fixes:

- Spelling

- Punctuation

- Clarity-level mistakes

I dropped a few “final” client pieces in there and still caught missing commas and odd word choices. So now my last step before sending anything out is:

Humanizer output → Grammar Checker → Copy to doc

- Free AI Paraphraser

This one is closer to a classic rewriter. It:

- Keeps the core meaning

- Switches structure and word choice

I used it for:

- Turning dense drafts into simpler, shorter versions

- Rewording sections to avoid repeating phrases across pages

- Swapping tone between “blog style” and “email style”

If you do SEO work, this is useful for adjusting sections between posts so they are not clones.

Why I stuck with it

For me the big value is that it puts four tools in one place:

- Humanizer

- AI writer

- Grammar checker

- Paraphraser

All of it inside one simple interface.

My real workflow on busy days:

- Let another model generate a long draft.

- Paste into Clever AI Humanizer, use Casual or Simple Academic.

- Run the result through Grammar Checker.

- Paraphrase any parts that still feel stiff.

- Spot edit by hand.

This cut down the back-and-forth between multiple apps and removed a bunch of copy-paste friction.

Some things that annoyed me

It is not magic. A few issues I hit:

- Some detectors still flag parts of the text as AI. Not often, but it happens. No tool fully guarantees safety.

- Text often comes out longer than the original. The tool adds a bit of explanation and variation to shake off AI patterns. So if you need tight word counts, you will need to trim.

- You still need to read your output. It is better than raw AI, but it is not “paste, click, publish”.

If you expect a one-click “human” button, you will be disappointed. If you treat it as a high-volume rewriter that respects meaning, it is decent.
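If you work to strict word counts, a quick before/after check tells you how much trimming a rewrite needs. Below is a minimal sketch, assuming whitespace-separated tokens are close enough to the count your client or school uses; `word_count`, `length_report`, and the `target` parameter are hypothetical names for illustration, not part of any tool mentioned in this thread.

```python
from typing import Optional


def word_count(text: str) -> int:
    """Approximate word count: number of whitespace-separated tokens."""
    return len(text.split())


def length_report(original: str, rewritten: str, target: Optional[int] = None) -> dict:
    """Compare word counts before and after a rewrite.

    growth_pct is the percentage change relative to the original.
    If target is given, words_to_trim is how many words must be cut
    from the rewrite to get back down to it (0 if already at or under).
    """
    before = word_count(original)
    after = word_count(rewritten)
    # Avoid dividing by zero on an empty original.
    growth = (after - before) / before * 100 if before else 0.0
    report = {"before": before, "after": after, "growth_pct": round(growth, 1)}
    if target is not None:
        report["words_to_trim"] = max(0, after - target)
    return report


if __name__ == "__main__":
    draft = "This draft has exactly eight words in it."
    humanized = "Honestly, this draft has exactly ten words in it now."
    print(length_report(draft, humanized, target=8))
    # {'before': 8, 'after': 10, 'growth_pct': 25.0, 'words_to_trim': 2}
```

Run it on each humanized chunk against your brief's limit before moving on to the grammar pass.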

Where to dig deeper

If you want to see a detailed breakdown with proof screenshots and detector tests, there is a longer writeup here:

Someone also recorded a YouTube review that walks through it:

If you want to see people fighting about humanizers in general, these Reddit threads helped me sanity-check my own tests:

Best AI humanizers thread:

https://www.reddit.com/r/DataRecoveryHelp/comments/1oqwdib/best_ai_humanizer/

General humanizing AI discussion:

https://www.reddit.com/r/DataRecoveryHelp/comments/1l7aj60/humanize_ai/

TL;DR from my side

- It is free with high limits.

- It got 0 percent on ZeroGPT in my small batch of tests using Casual style.

- It keeps meaning stable, still needs manual review.

- It reduces, not removes, detector risk.

If you write with AI a lot and hate juggling logins, it is worth tossing a few of your own samples in and seeing how detectors react on your side.

Short answer: there is no “truly undetectable” humanizer. Detectors change, and any tool that promises 100% safety is selling you hope.

That said, if you want a free competitor to the big “undetectable” brands without watermarks and tiny limits, here is what has worked for me, on top of what @mikeappsreviewer already shared.

- Clever Ai Humanizer as your base

They covered it in detail, so I will not repeat the same workflow. I will add how I use it differently.

My simple stack:

• Generate your draft with your usual model.

• Split long stuff into 5k to 6k word chunks.

• Run each chunk through Clever Ai Humanizer in “Simple Formal” for business docs, “Casual” for anything blog-like.

• Then do one more short pass by hand to add your real habits. Stuff like: “tbh”, “idk”, “yup”, bullet points where you tend to use them, small parenthetical comments.

Reason: detectors often pick up on the overly clean rhythm. Clever Ai Humanizer breaks that up a bit, and you add your own noise on top.
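The splitting step is easy to script if you do it often. Below is a minimal sketch, assuming plain text with blank lines between paragraphs and that whitespace-separated tokens are close enough to how the tool counts words (it may count differently, so leave some headroom). `chunk_by_words` is a hypothetical helper, not a Clever Ai Humanizer feature; the 6,000-word default just matches the 5k to 6k range above. It keeps paragraphs whole where it can and only cuts mid-paragraph when a single paragraph is longer than the limit.

```python
import re


def chunk_by_words(text: str, max_words: int = 6000) -> list[str]:
    """Split text into chunks of at most max_words words.

    Paragraphs (separated by blank lines) are kept together when possible;
    a paragraph longer than max_words is split on word boundaries.
    """
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", text) if p.strip()]
    chunks: list[str] = []
    current: list[str] = []
    current_count = 0

    for para in paragraphs:
        words = para.split()
        if len(words) > max_words:
            # Flush whatever is pending, then hard-split the oversized paragraph.
            if current:
                chunks.append("\n\n".join(current))
                current, current_count = [], 0
            for i in range(0, len(words), max_words):
                chunks.append(" ".join(words[i:i + max_words]))
            continue
        if current_count + len(words) > max_words:
            # Adding this paragraph would overflow the chunk, so start a new one.
            chunks.append("\n\n".join(current))
            current, current_count = [], 0
        current.append(para)
        current_count += len(words)

    if current:
        chunks.append("\n\n".join(current))
    return chunks


if __name__ == "__main__":
    # Five paragraphs of 2,500 words each, roughly a 12.5k-word draft.
    draft = "\n\n".join("word " * 2500 for _ in range(5))
    for n, chunk in enumerate(chunk_by_words(draft, max_words=6000), start=1):
        print(n, len(chunk.split()))
```

Paste each chunk into the humanizer separately, then stitch the outputs back together in the same order.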

My ZeroGPT and GPTZero tests

I ran:

• 10 tech blog posts, 1.5k to 3k words

• 4 internal SOP style docs, 2k words each

The workflow above gave me:

• ZeroGPT: 0 to 5 percent “AI” on most chunks

• GPTZero: more aggressive, 10 to 25 percent on long, formulaic sections

So not invisible, but much less red flag territory than straight AI.

- Change your prompts, not only the tool

Humanizers help, but if your input looks like “generic AI sludge”, the output still has a pattern.

I switched my base generation prompts to:

• Ask for short paragraphs, 2 to 4 sentences.

• Ask for a mix of sentence lengths.

• Ask it to “include 1 or 2 minor grammar quirks” and “avoid sounding like a textbook”.

Then I send that into Clever Ai Humanizer. I get cleaner detector scores this way than when I start from a super formal, robotic original.

- Manual “noise” tricks that take 3 minutes

I know you asked for tools, but these tiny edits move the needle a lot for detectors:

• Replace a few perfect synonyms with stuff you actually use. “Use” instead of “utilize”, “a lot” instead of “a great deal”, etc.

• Add 1 or 2 short incomplete sentences.

• Put in one or two small typos, then correct only some of them.

• Change section headings so they sound like you, not like a template.

Detectors are trained on model-style text. You want your own fingerprint in there.

- Stop chasing “0 percent AI” as the goal

If your use case is:

• School papers

• Client content policies

• Platforms like Upwork or Fiverr

Aim for text that passes a human skim first. Then run it through a couple of detectors to see if it is “suspicious” or “clearly AI”. A lot of clients do not care about a low AI percentage. They care when the tool screams “this is 100 percent AI”.

Clever Ai Humanizer helps bring you down from 90 percent to something more boring and safe. Expecting it to make every detector show 0 forever will drive you nuts.

- Quick process you can repeat

My repeatable flow for long stuff:

- Draft with any LLM using non-robotic prompts.

- Run through Clever Ai Humanizer, pick a tone that matches the final use.

- Skim and add your natural phrases, mild slang, small formatting quirks.

- Run through a grammar checker, but do not sterilize every imperfection.

- Spot-check with 1 or 2 detectors.

That keeps it fast, free, and good enough for most real situations, without you hunting for some magical “undetectable” tool that does not exist.

If you’re hunting for some mythical “undetectable AI humanizer” that’s 100% free and never gets caught, you’re basically looking for a cheat code that doesn’t exist. Detectors evolve, models evolve, the cat and mouse never stops. So I’d stop framing this as “how do I become invisible” and focus on “how do I stay under the radar and look like a normal human writer.”

Couple of points that build on what @mikeappsreviewer and @byteguru already said, without rehashing their whole process:

- Tool reality check

Most of the “undetectable” brands are just:

- A rewriter on top of a mainstream LLM

- A nice-looking UI

- A scary marketing page

They still leave you at the mercy of detectors. Some even increase detection because they over-sanitize and create the same bland rhythm over and over. So if a tool screams “100% undetectable” and then gives you 1,000 free words before a paywall, I’d treat that as a walking red flag.

- Free competitor angle

If your top constraints are:

- No watermark

- No tiny 1k word limits

- Actually usable on long pieces

Then yeah, Clever Ai Humanizer is kinda the only semi-sane option I’ve seen that doesn’t nickel and dime you to death. The free tier limits are actually usable for real work, not just “test 2 paragraphs and please upgrade.” It’s not magic, but as a free competitor to the hyped “undetectable” tools, it’s legit in that narrow sense.

That said, I slightly disagree with how heavily people lean on detectors like ZeroGPT as the sole judge. Some of those tools are blunt instruments. I’ve seen:

- Human text flagged as AI

- Humanized AI text pass as human on one site and fail miserably on another

So I’d use detectors as tripwires, not as the law. If Clever Ai Humanizer + some manual edits bring you from “100% AI” to “low/medium suspicion,” you’re already in the realistic zone.

- Where a humanizer actually helps

Best use of a humanizer (Clever or any other):

- Fixing obvious “AI cadence”

Those perfectly even 3–4 sentence paragraphs, the same intro/transition/summary structure, corporate-robot wording. A humanizer that mixes sentence length, changes phrasing, and nudges tone already drops your detection risk a lot.

- Handling longform without going insane

If you’re doing 2k–5k word posts, doing every rewrite by hand is a pain. A tool like Clever Ai Humanizer that takes big chunks, keeps meaning stable, and doesn’t flood you with paywalls is actually valuable. Not because it’s “undetectable,” but because it cuts your manual clean-up time.

- Where I would not trust any humanizer

This might be unpopular, but I would not rely purely on Clever Ai Humanizer or anything similar if:

- You’re submitting high-stakes academic work where schools actively run multiple detectors

- You’re under a contract that explicitly bans AI with penalties

For that kind of thing, a humanizer should be a helper, not your shield. You still need to:

- Rewrite some sections from scratch in your own words

- Inject your actual opinions, examples, and mistakes

- Change structure, not only vocabulary

- A slightly different angle than the others

@byteguru’s “add some manual noise and quirks” idea is solid, but you can go one level deeper than just throwing in “idk” and a typo:

- Change information order

Take a humanized paragraph and swap the order of explanations. Detectors are trained on typical AI logic flow. Changing sequence is harder for them to model.

- Insert genuinely personal references

One or two specific references to your own experience, your tools, your workflow, your niche. Not generic “in today’s fast-paced world” fluff. That kind of anchored detail is much more “human” than just changing words.

- Merge AI and original chunks

Write 20–30% of the piece yourself, interleaved. So:

  - Sections you fully write

  - Sections run through Clever Ai Humanizer

  - Some transitions written by you

That blend makes it much less pattern-perfect than a fully machine-generated and mass-humanized block.

- So what should you actually do?

If you want a free competitor to the “undetectable” brands that:

- Doesn’t watermark

- Has sane word limits

- Gives you a decent shot at not lighting detectors up like a Christmas tree

Then:

- Use Clever Ai Humanizer as your main rewriter / humanizer

- Stop expecting it or any tool to guarantee 0% detection

- Combine it with your own editing and a couple of detectors as a quick check, not a final verdict

The harsh truth: the only truly “undetectable AI content” is the stuff that has enough of you in it that it stops being purely AI content. Humanizers, including Clever Ai Humanizer, just get you closer to that line with less effort and without draining your wallet.

Detectors are basically pattern sniffers, so the game is less “find a magic humanizer” and more “break the patterns in a smart, low-effort way.”

A few angles that haven’t been hit hard yet:

1. Rotate where the text comes from, not only how you rewrite it

Instead of a single LLM → Clever Ai Humanizer → done, try mixing sources:

- Outline with one model

- Draft subsections with another

- Humanize all of it in one pass with Clever Ai Humanizer

You end up with less uniform “model DNA,” which seems to bother some detectors more than wording alone. I’ve seen long pieces that were obviously AI in style suddenly drop to “low suspicion” once the structure itself was a Frankenstein of different generators plus a humanizer.

2. Content-level randomness beats surface tweaks

I slightly disagree with relying too much on small quirks like “tbh” and mild typos. They help, but detectors are increasingly sensitive to structure and information density.

Higher impact changes:

- Vary the depth of sections. Make one section almost too detailed, another more shallow. AI tends to be evenly mid-depth everywhere.

- Intentionally leave one or two “obvious” questions unanswered, then answer them later. Humans do this all the time.

Clever Ai Humanizer is decent at smoothing phrasing, but it will still keep a pretty tidy logic flow unless you disrupt it afterward.

3. Structure manipulation > synonym shuffling

Instead of just asking a tool to “rewrite,” focus on:

- Splitting or merging subsections

- Reordering bullet points where order is not crucial

- Turning a formal paragraph into a Q&A or mini-story format

You can let Clever Ai Humanizer handle the first pass to clean up the robotic tone, then manually mess with the layout. That two-layer change is way harder for detectors to model than a plain paraphrase.

4. Clever Ai Humanizer specifically: realistic pros & cons

Pros

- Genuinely usable free tier with high word limits, which solves your “truly free competitor” requirement better than most.

- Handles long chunks without forcing you into a million micro-pastes.

- Tends to reduce the classic AI cadence and smooth out stiffness, which already drops many detector scores.

- Extra tools (writer, grammar checker, paraphraser) mean you can stay in one place for most of the pipeline.

Cons

- It still centralizes everything into one “style,” so if you never add your own edits, you just trade one detectable pattern for another.

- It often lengthens text, which is bad if you are trying to match strict word counts for school or client briefs.

- Not great as your only defense in high-stakes environments like strict universities that chain multiple detectors. You still need real rewriting on top.

Compared to what @byteguru, @shizuka and @mikeappsreviewer described, I’d say: use Clever Ai Humanizer as the heavy lifter, but treat their methods as the “noise layer” on top rather than a full workflow by themselves.

5. Focus on “hybrid authorship” instead of “undetectable”

A practical pattern that usually flies under the radar:

- 60–70% of the text: LLM + Clever Ai Humanizer

- 30–40%: written or heavily altered by you, especially intro, conclusion, and any personal or example-heavy parts

Detectors are surprisingly bad at scoring text with a mixed signature, as long as the human parts are not just one tiny paragraph bolted on at the end.

If you want something that is free, not full of watermarks and caps, and actually helps readability, Clever Ai Humanizer is worth keeping in your stack. Just do not stop there. The closer your final output reflects your own thinking, structure choices and habits, the less any “AI detector” result matters in practice.