I’ve been checking out Walter’s AI review articles and they seem pretty helpful, but I’m wondering if there are any hidden issues or downsides others have noticed. Have you run into bias, inaccuracies, or problems with how tools are rated there? I’m trying to decide whether to trust these reviews for choosing AI software, so any real user experiences or red flags would really help.

Walter Writes AI – my quick take after messing with it

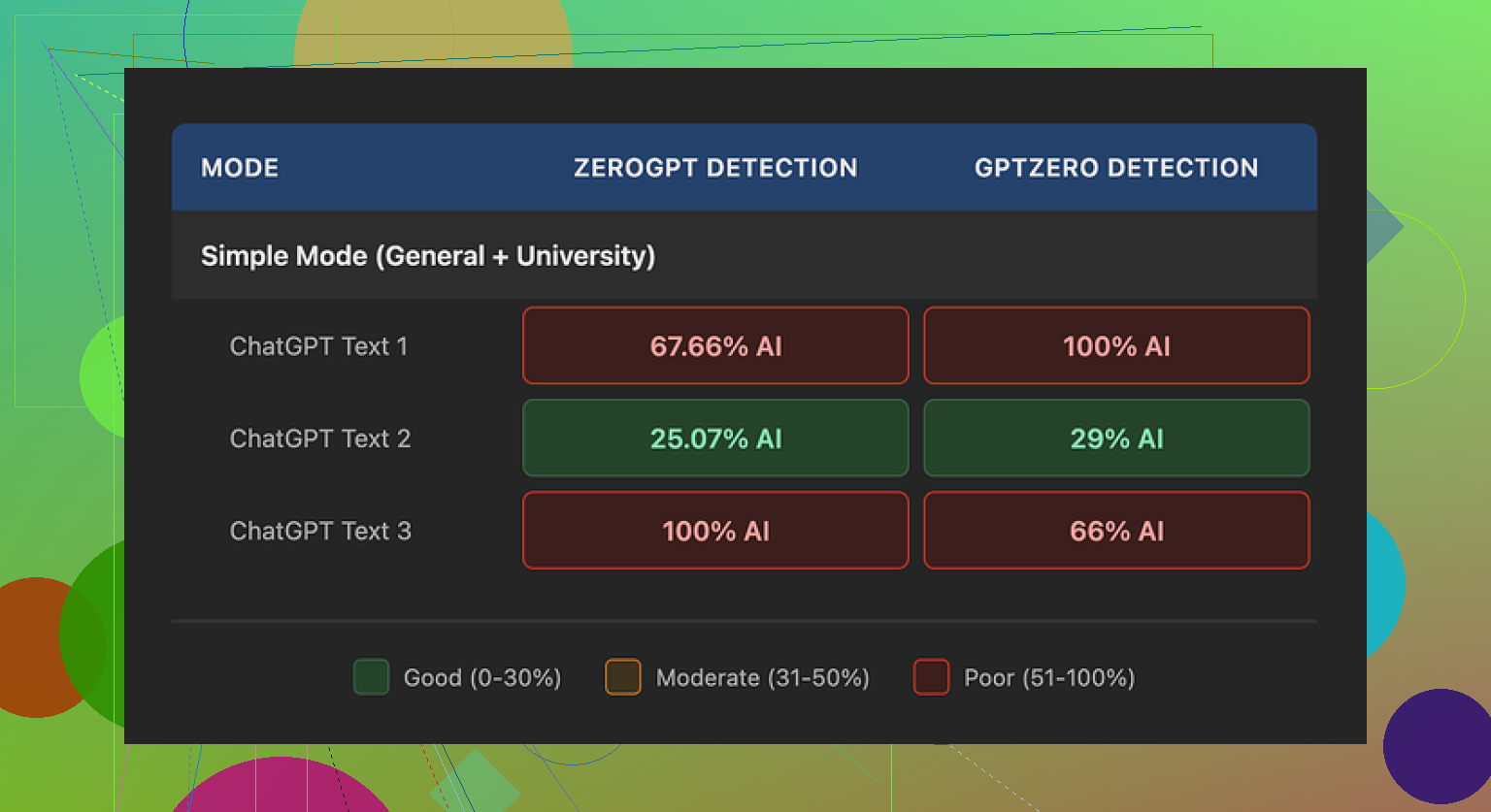

I ran a few chunks of text through Walter Writes AI and then threw the results at GPTZero and ZeroGPT. It was all over the place.

One output landed at 29% on GPTZero and 25% on ZeroGPT, which is better than what I have seen from most free “humanizer” tools. So I got my hopes up for a minute.

Then I tested two more samples and those swung to 100% AI on at least one detector each time. Same type of input, same Simple mode, wildly different scores. So, consistency is not there, at least on the free tier.

To be fair, I only used the free “Simple” mode. Paid plans unlock “Standard” and “Enhanced” bypass levels, so results might look different there, but I did not pay to find out.

Here is where it started to bug me

The writing pattern from Walter Writes AI had some odd tics that kept repeating:

• It dropped semicolons in places where a normal person would use commas or break the sentence in two.

• In one sample, it used the word “today” four times across three sentences. Looks strange when you read it out loud.

• It leaned hard on parenthetical examples like “(e.g., storms, droughts)” and kept doing that again and again, which is a common AI tell right now.

So, even when the detector score looked decent, the text still read like AI to me. If you are trying to pass a human eye check, those patterns will stand out.
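If you would rather count these tics than eyeball them, here is a rough Python sketch. It is my own helper, not something Walter Writes AI or any detector provides. The name `find_tics`, the 4-letter minimum, and the 3-repeat threshold are arbitrary assumptions, and common words like "that" will show up as noise on longer texts.

```python
import re
from collections import Counter

def find_tics(text, min_repeats=3):
    """Return crude counts of the tics described above (hypothetical helper)."""
    words = re.findall(r"[a-z']+", text.lower())
    # Only look at words of 4+ letters to skip most filler like "the" or "a"
    counts = Counter(w for w in words if len(w) >= 4)
    repeated = {w: n for w, n in counts.items() if n >= min_repeats}
    return {
        "repeated_words": repeated,
        "semicolons": text.count(";"),
        # Counts openings like "(e.g." or "(i.e."
        "parenthetical_examples": len(re.findall(r"\((?:e\.g\.|i\.e\.)", text)),
    }

# Made-up sample text that imitates the patterns, not real tool output
sample = (
    "Farmers today face risks (e.g., storms, droughts); today the costs rise. "
    "Many regions today lack support (e.g., loans, insurance); today that matters."
)
print(find_tics(sample))
```

High counts do not prove anything on their own. They only tell you where to look when you edit by hand.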

Pricing and limits

Here is what I noted from their plans:

• Starter: from $8 per month on an annual plan, 30,000 words.

• Higher tiers go up from there, but even the “Unlimited” option at $26 per month caps each submission at 2,000 words, so you have to slice longer documents into pieces.

• Free plan: total of 300 words, which you will burn through fast if you are testing seriously.
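If you do end up slicing documents to fit the 2,000-word cap, a small script saves some copy-paste pain. This is a minimal sketch under my own assumptions: `split_by_word_cap` is a made-up name, and it counts words by whitespace, which may not match how the tool counts them. It breaks on blank-line paragraph boundaries where it can and only hard-splits a paragraph that is longer than the cap by itself.

```python
def split_by_word_cap(text, cap=2000):
    """Split text into chunks of at most `cap` whitespace-separated words."""
    chunks, current, count = [], [], 0
    for para in text.split("\n\n"):
        words = para.split()
        if not words:
            continue
        # A single paragraph longer than the cap is hard-split by word count
        while len(words) > cap:
            if current:
                chunks.append("\n\n".join(current))
                current, count = [], 0
            chunks.append(" ".join(words[:cap]))
            words = words[cap:]
        # Start a new chunk if this paragraph would push the current one over
        if count + len(words) > cap and current:
            chunks.append("\n\n".join(current))
            current, count = [], 0
        current.append(" ".join(words))
        count += len(words)
    if current:
        chunks.append("\n\n".join(current))
    return chunks
```

Note that this collapses extra whitespace inside paragraphs, and it does nothing about the style drift you get when each piece is rewritten separately.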

The refund section is… aggressive. The wording mentions chargebacks and talks about legal action in a way that felt hostile for a text tool. On top of that, they do not clearly explain what happens to the text you paste in, how long they keep it, or whether it is used for training.

That part alone is a red flag if you care about keeping your content under your control.

What worked better for me

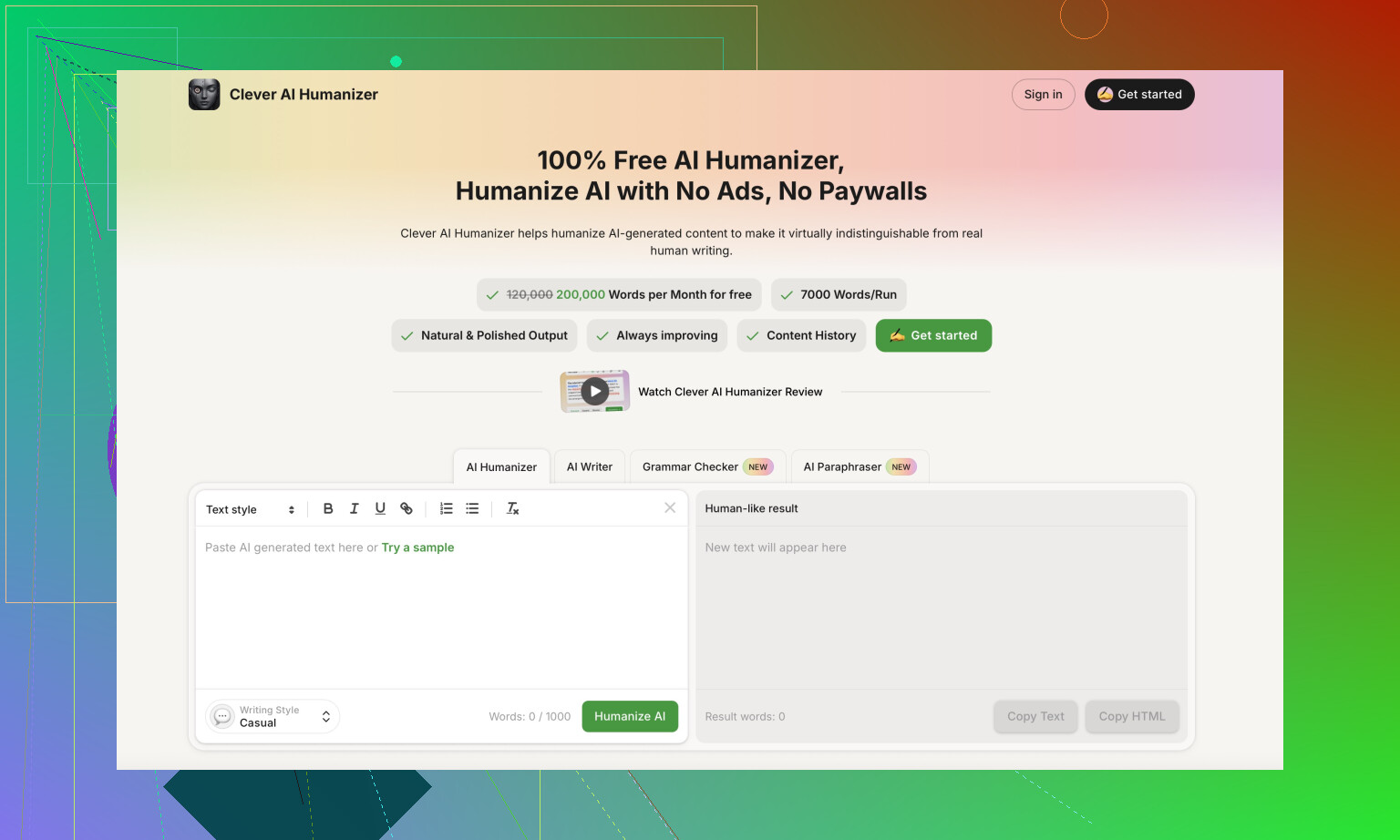

While testing different options, I got better and more natural output from Clever AI Humanizer, and I did not have to pay to use it.

For anyone who wants to see how people are using these tools in the wild, these helped:

Humanize AI (Reddit tutorial)

https://www.reddit.com/r/DataRecoveryHelp/comments/1l7aj60/humanize_ai/

Clever AI Humanizer review on Reddit

https://www.reddit.com/r/DataRecoveryHelp/comments/1ptugsf/clever_ai_humanizer_review/

I have seen a few downsides with Walter’s AI reviews and the Walter Writes AI tool itself, so here is a blunt breakdown.

- Bias and “review style”

A lot of the Walter Writes AI reviews lean toward positive framing. You see long sections about features, then a short “cons” part. That skews your expectations. It feels affiliate-style. The criticism is there, but it stays soft. If you rely only on those reviews, you miss how rough some tools feel in real use.

- Surface-level testing

From what I have seen, the tests in those articles are often simple, like one or two examples per tool. No long-term use. No niche edge cases. No hard data on failure rates. So if your use case is more serious, you can run into problems that the review never mentioned.

- Walter Writes AI output issues

This lines up with what @mikeappsreviewer said, but my experience was a bit different.

I pasted in blog-style content and asked it to humanize for a B2B audience. Problems I hit:

• Repeated phrases, especially stock “today” and “in today’s world” type lines.

• Overuse of parenthetical examples, so sentences start to look like academic homework.

• Weird comma and clause structure, which makes long paragraphs feel stiff.

For short social posts it was passable. For long articles, the rhythm gave it away as AI. A human editor has to go over it.

- AI detector performance

I also played with free “Simple” mode only. Sometimes it dropped AI detector scores to 20 to 40 percent. Other times it went straight to 90 to 100 percent AI, even with similar input. The lack of consistency makes it hard to trust for anything high risk like school or client reports.

Detectors are unreliable in general, so I would not obsess over exact numbers. The bigger issue is how the text reads. If it smells robotic, a professor or editor will notice, regardless of what GPTZero says.

- Data and policy concerns

This one is big if you care about privacy or client work.

• No clear, detailed statement about how long they store your text.

• No strong wording about “we never train on your content”.

• Refund and chargeback terms look aggressive and legalistic.

If you handle client docs, medical or legal notes, or anything under NDA, this is a problem. I would not paste sensitive stuff into a tool with vague data handling.

- Pricing friction

You hit a paywall pretty fast.

• Free tier is only a few hundred words. Testing drains that fast.

• You must split long documents because of per-submission caps. That breaks the flow and style.

• Refund language makes it feel like once you pay, you are locked in.

If you want to evaluate it properly, you end up paying before you know if it fits your workflow.

- Where Walter’s reviews still help

To be fair, the AI review articles help you get a quick overview of tools. If you treat them as a starting point, they have value. You just need to pair them with:

• Your own tests on your real content.

• Other third-party reviews and Reddit threads.

• A read of the actual terms and privacy pages.

- Practical tips if you still try it

If you want to keep using Walter Writes AI or follow the reviews, here is what I would do:

• Never rely on a single review source.

• Paste only non-sensitive content.

• Use it for rough drafts, then edit by hand. Fix repetition, tone, and sentence length.

• Run your own detector checks, but focus more on human readability.

• Screenshot or log your original content and outputs so you can compare results over time.
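For the logging tip, something as simple as a JSON Lines file works. This is only a sketch: `log_run` and `load_runs` are hypothetical names, the detector scores are typed in by hand (nothing here calls GPTZero or ZeroGPT), and the record layout is just one way to do it.

```python
import json
import time
from pathlib import Path

def log_run(log_path, tool, original, output, scores):
    """Append one test run as a JSON line so runs can be compared later."""
    record = {
        "timestamp": time.strftime("%Y-%m-%dT%H:%M:%S"),  # local time
        "tool": tool,
        "original": original,
        "output": output,
        "scores": scores,  # entered by hand, e.g. {"GPTZero": 29, "ZeroGPT": 25}
    }
    with Path(log_path).open("a", encoding="utf-8") as f:
        f.write(json.dumps(record, ensure_ascii=False) + "\n")
    return record

def load_runs(log_path):
    """Read all logged runs back as a list of dicts."""
    with Path(log_path).open(encoding="utf-8") as f:
        return [json.loads(line) for line in f if line.strip()]
```

Call `log_run("runs.jsonl", "Walter Writes AI", original_text, output_text, {"GPTZero": 29})` after each test, then use `load_runs` to see how much the scores swing for similar input.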

- Alternative worth testing

For pure “make this sound more human” tasks, I had a smoother time with Clever AI Humanizer.

It kept sentence structure more natural and did not spam certain phrases as much. It also felt less pushy on payment during testing, which made it easier to experiment. If you care about SEO text that reads closer to human writing, Clever AI Humanizer is worth a direct A/B test against Walter Writes AI on the same paragraphs.

Quick way to compare:

• Take one real article of yours.

• Run one copy through Walter Writes AI.

• Run another copy through Clever AI Humanizer.

• Read both out loud and have a friend pick which feels less robotic.

That will tell you more than any single review, mine included.
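If you want the comparison above to be fair, hide which tool produced which version before your friend reads them. Here is a minimal sketch of that. `blind_pair` is a made-up helper, and you paste both outputs in yourself.

```python
import random

def blind_pair(output_a, output_b, seed=None):
    """Shuffle two outputs and return (samples to show, answer key to keep hidden)."""
    rng = random.Random(seed)
    pair = [("Walter Writes AI", output_a), ("Clever AI Humanizer", output_b)]
    rng.shuffle(pair)
    samples = {f"Sample {i + 1}": text for i, (_, text) in enumerate(pair)}
    key = {f"Sample {i + 1}": tool for i, (tool, _) in enumerate(pair)}
    return samples, key
```

Show your friend only `samples`, write down which one they pick, then check `key`. Repeating this with a few different paragraphs tells you more than a single round.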

Short version: Walter’s stuff is useful as a starting point, but I would not treat his reviews or Walter Writes AI as “trust and forget.”

Here’s where I’ve seen downsides that haven’t been fully covered yet:

- Review “voice” and expectations

Walter’s site has a very similar tone across most tool reviews: structured, clean, lots of screenshots and feature lists. That’s nice for skimming, but it also smooths out the rough edges. You rarely see “this part is basically unusable” or “this feature is borderline scammy.” Compared to what @mikeappsreviewer and @waldgeist tested hands‑on, the articles feel a bit too polished and forgiving.

- Lack of context about who the tool is good for

The reviews often say “great for bloggers, students, marketers” all in one breath. Those groups have totally different risk profiles.

- A student trying to dodge AI detectors

- A content agency handling client docs

- A solo blogger just trying to save time

Those are not the same thing, but the reviews kind of lump them together. That can be misleading when the tool has weak privacy wording or flaky outputs.

- Walter Writes AI and content ownership

Something that bothers me more than the writing quirks: I don’t see a clear, strongly worded stance on content ownership and training data. It’s not enough to just not mention training; in 2025, I want to see “we don’t train on your text” in big letters. Without that, I treat it as “assume they can,” especially for free tiers.

If you’re working with client contracts, academic stuff, or anything under NDA, that’s not a small detail.

- The “AI review bubble” problem

Walter isn’t the only one doing this, but his site is part of a bigger pattern:

- Tools get reviewed shortly after launch

- Everyone uses basic tests

- No one comes back 3–6 months later to see what broke, what got nerfed, or what ToS quietly changed

So you get a frozen-in-time snapshot. With a tool like Walter Writes AI, that’s risky, because small policy edits in the background can completely change whether it’s safe for your use case. Reviews don’t always catch that.

- Subtle repetition and tone issues

I actually disagree slightly with how harsh some people are on the writing quality. For casual stuff, Walter Writes AI is “ok-ish.” But if you rely on it heavily, something weird happens over a whole article:

- Phrases start echoing

- Your voice flattens into that generic “blog-by-committee” tone

- Paragraph length becomes oddly uniform

That doesn’t always trigger detectors, but it does make everything sound like the same writer, which can be an issue if you run multiple sites or write for different brands.

- You can get locked into the ecosystem mindset

When you read one person’s AI reviews for everything, your “option space” shrinks without you noticing. Tools that don’t play the marketing game as hard often never show up. That’s why I like cross‑checking with other reviewers like @mikeappsreviewer and @waldgeist and then testing on my own content.

- What I’d actually do in your place

- Use Walter’s reviews to find tool names and basic feature lists.

- Ignore the “this is amazing for X” hype and test on your real use case.

- Read the ToS / privacy page directly, especially for anything involving clients or school.

- Treat Walter Writes AI as a rough-draft helper, not a “fix all my AI issues” button.

- Don’t dump sensitive docs into it until there’s rock-solid data handling language.

- On alternatives

If your main goal is “make this sound more human and less robotic,” definitely run an A/B test with Clever AI Humanizer. It tends to keep things closer to natural phrasing and, in my experience, doesn’t hammer the same filler phrases over and over. For long-form content where you actually care how it reads to humans, that matters more than the exact GPTZero score.

TL;DR: Walter’s reviews aren’t useless, but they are not the whole story, and Walter Writes AI has enough quirks, policy questions, and consistency issues that I’d only use it lightly and never as my sole solution.