I’ve been considering using WriteHuman AI for content writing, but I’m unsure if it’s worth trusting for important projects. Has anyone here used it extensively and can share real experiences, pros, cons, and whether it’s reliable compared to other AI writing tools?

WriteHuman AI review from someone who paid for it

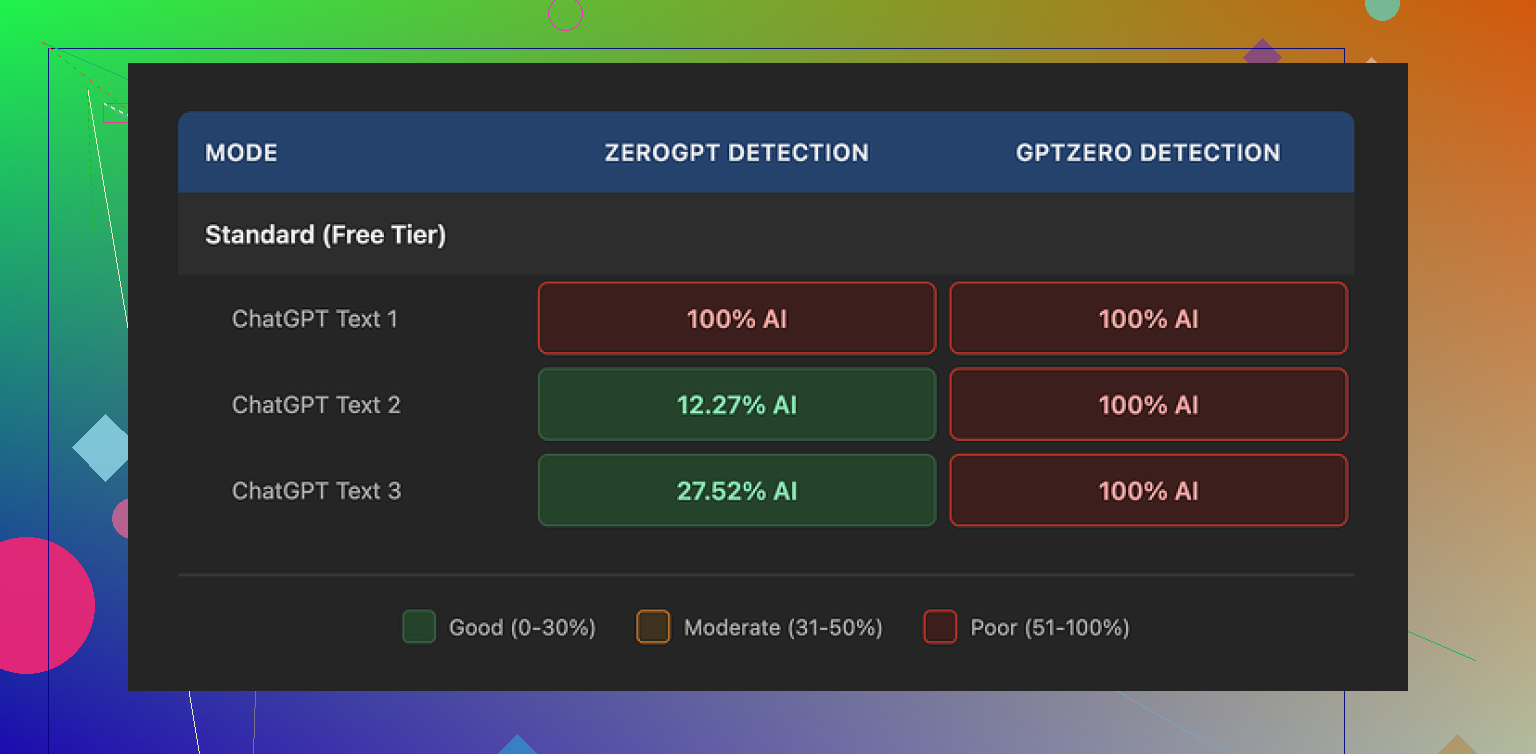

I tried WriteHuman after seeing their marketing mention GPTZero by name, so I went straight for that in my tests.

Here is what happened.

I took three different samples, ran them through WriteHuman, then checked the outputs on:

- GPTZero

- ZeroGPT

On GPTZero, every single WriteHuman output showed as 100% AI. Not high, not mixed, straight 100% for all three.

On ZeroGPT it got weird:

- Sample 1 came back as 100% AI

- Sample 2 dropped to around 12%

- Sample 3 jumped to about 28%

So detection scores bounced all over. A 12% result might sound fine at first, but you cannot trust it, because the same detector that showed 12% on one sample showed 100% on another sample from the same system.
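To put a number on that inconsistency, here is a minimal Python sketch using only the three ZeroGPT readings listed above. The variable names are illustrative, and two of the three values were approximate readings, so treat the output as rough.

```python
from statistics import mean

# ZeroGPT "AI" percentages reported above for the three WriteHuman outputs.
# Samples 2 and 3 were approximate readings ("around 12%", "about 28%").
zerogpt_scores = [100, 12, 28]

spread = max(zerogpt_scores) - min(zerogpt_scores)  # gap between highest and lowest score
average = mean(zerogpt_scores)

print(f"range: {spread} points, mean: {average:.1f}%")
# prints "range: 88 points, mean: 46.7%"
```

An 88-point gap across three samples from the same tool is the reason a single low score tells you very little.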

Writing quality

The writing itself looked off to me.

One thing I saw a lot was hard tone shifts inside the same piece. You get one paragraph that sounds like a college essay, then the next one sounds like a casual blog comment. No transition. It reads like it was stitched together from different writers.

There was also a typo in my output, “shfits” instead of “shifts”. On one hand, that kind of error can help dodge some basic detectors, since they often expect clean text. On the other hand, if you plan to hand this in to a client, teacher, or manager, this is the kind of thing that makes you look sloppy unless you manually proof everything after.

So you end up doing extra editing work to fix what the tool did, which sort of defeats the point of paying for a humanizer.

Pricing and terms

Their pricing when I checked:

- Starts at 12 dollars per month (if billed annually)

- That Basic plan gives you 80 requests per month

All paid tiers unlock what they call an “Enhanced Model” plus more tone options. I did not see a clear, consistent jump in quality from what they showed on the site, but I only tested at the entry level, so keep that in mind.

Two things in their terms are important:

- They say in plain language they do not guarantee bypass of any AI detector.

- They have a strict no refund policy.

Put those together and you get a paid tool that advertises around detection, tells you up front it might fail, and offers no refunds when it does.

Data usage

Another key bit that some people will miss if they do not read the fine print:

- Anything you submit is licensed for AI training

So if you do not want your text used to train models, your only real option is to skip the service completely. There is no opt out in the flow I saw.

Alternative that worked better for me

When I ran similar tests, I got lower AI detection scores with:

Clever AI Humanizer

Detection scores were lower there in my runs, and the access did not sit behind the same kind of pricing wall, which made it easier to test without worrying about sunk cost.

If your priority is detection evasion, and you are choosing between these two, my experience leaned toward Clever AI Humanizer being more effective and less risky for your wallet.

I’ve used WriteHuman on and off for client work, so I’ll add a different angle to what @mikeappsreviewer shared.

Short version. I would not trust it for important projects where your name or job is on the line.

Here is what I saw in practice:

- Detection and “humanization”

I tested it on marketing blog posts and internal docs.

Tools I used:

- GPTZero

- ZeroGPT

- Originality.ai

Results were mixed.

Example set:

- 5 marketing articles pushed through WriteHuman.

- GPTZero flagged 4 of 5 as mostly or fully AI.

- ZeroGPT flagged 3 of 5 as high AI probability.

- Originality.ai showed 65 to 90 percent AI on all 5.

So the text sometimes looked a bit more “messy”, but detectors still hit it a lot. This lines up with what you saw in that screenshot, but I got fewer wild swings than @mikeappsreviewer. It was more consistently “partly AI” than “fully human”.

If your main goal is detector evasion, my results were not good enough to trust for school or corporate compliance.

Clever AI Humanizer did better in my tests. On the same 5 articles, Originality.ai dropped to 15 to 40 percent AI and the GPTZero results looked less suspicious. Still not risk-free, but it was the stronger option for humanizing SEO content.

- Writing quality

This part bothered my clients more than the detector scores.

Patterns I saw:

- Inconsistent tone in the same piece, like formal intro then chatty mid section.

- Awkward sentence structures that felt “off” but not wrong.

- Occasional weird word choices that no human would use in that context.

I had to:

- Rebuild some paragraphs to keep tone stable.

- Fix little grammar issues and strange phrasing.

For a 1,500-word article, I often spent 25 to 40 minutes cleaning up. At that point, I was better off starting from a good base model output and editing it myself.

- Reliability for “important” projects

For things that matter:

- Client blog posts with brand guidelines.

- Academic-adjacent stuff like reports.

- Anything under legal or compliance review.

I stopped using WriteHuman. Too much time spent checking:

- Tone consistency.

- Factual drift between original and “humanized” output.

- Detector scores if the client cared.

I still used it once or twice for low stakes content like PBN posts and throwaway niche sites, where a bit of mess is acceptable. Even there, I started moving to other tools because of the pricing.

- Pricing and value

The 80 requests limit on the basic plan was rough for me.

Example:

- One 2,000 word article split into 4 to 6 chunks to avoid timeouts or glitches.

- That eats up 4 to 6 of your 80 “requests” for one piece.

If you handle volume, the cost per usable article starts to look bad, especially when you still need to edit heavily.
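A rough sketch of that arithmetic, assuming the figures quoted in this thread (12 dollars per month billed annually, 80 requests, 4 to 6 chunks per 2,000-word article) and assuming no requests are spent on retries. The constant and function names are made up for the example.

```python
# Figures quoted in this thread; treat them as a snapshot, not current pricing.
MONTHLY_PRICE_USD = 12        # Basic plan, billed annually
REQUESTS_PER_MONTH = 80       # Basic plan quota
CHUNKS_PER_ARTICLE = (4, 6)   # requests used by one 2,000-word article

def articles_per_month(requests: int, chunks: int) -> int:
    """Whole articles that fit in the monthly request quota."""
    return requests // chunks

for chunks in CHUNKS_PER_ARTICLE:
    n = articles_per_month(REQUESTS_PER_MONTH, chunks)
    cost = MONTHLY_PRICE_USD / n
    print(f"{chunks} chunks/article -> {n} articles/month, ${cost:.2f} per article")
# prints:
# 4 chunks/article -> 20 articles/month, $0.60 per article
# 6 chunks/article -> 13 articles/month, $0.92 per article
```

So the Basic plan caps out at roughly 13 to 20 long articles a month. The subscription cost per piece is small; the hard ceiling on volume and the editing time on top are what hurt.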

- Data and privacy

The licensing of your text for AI training is a hard no for some clients. I had one B2B SaaS client who banned any tools with that clause. If you handle:

- Client confidential info.

- Unpublished research.

- Internal strategy docs.

You should not feed that into a tool that trains on your content.

- When it might be okay

I think WriteHuman is only mildly useful if:

- You create low risk content, like filler posts.

- You do not care much about detectors, only want text to feel slightly less “model-like”.

- You are fine editing heavily after.

Even then, I would test Clever AI Humanizer in parallel. For my workload, it gave cleaner humanized output with fewer tone issues and better detector results on SEO content.

- Practical advice

If you are on the fence:

- Start with a small batch of your real content, not the samples they show.

- Run before/after through at least two detectors.

- Read the pieces aloud and listen for tone shifts.

- Track how long you spend fixing each output.

If your edit time stays high or detectors still scream AI, I would not put it on “important” projects.
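If you want to keep that trial honest, a small log helps. The following is only a sketch: the `Trial` class, the `passes` function, the detector labels, and both thresholds are hypothetical, and the sample numbers are invented for illustration, not measurements from any tool.

```python
from dataclasses import dataclass
from statistics import mean

@dataclass
class Trial:
    """One humanized piece: manual cleanup time and detector AI scores (0-100)."""
    edit_minutes: float
    detector_scores: dict[str, float]

def passes(trials: list[Trial], max_edit_minutes: float, max_ai_score: float) -> bool:
    """True if average cleanup time and the worst detector score both stay within limits."""
    avg_edit = mean(t.edit_minutes for t in trials)
    worst = max(max(t.detector_scores.values()) for t in trials)
    return avg_edit <= max_edit_minutes and worst <= max_ai_score

# Made-up numbers for illustration only; replace with your own measurements.
batch = [
    Trial(30, {"detector_a": 80, "detector_b": 35}),
    Trial(20, {"detector_a": 45, "detector_b": 20}),
]
print(passes(batch, max_edit_minutes=15, max_ai_score=50))  # False
```

Judging by the worst score rather than the average is deliberate here, since one heavily flagged piece is enough to cause trouble on a project that matters.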

TL;DR. It works sometimes, but it eats time, detection is unreliable, and the terms plus pricing make it hard to recommend for anything serious.

Short version: I wouldn’t put anything “important” on WriteHuman’s shoulders right now.

I’ve had a similar but slightly less harsh experience than @mikeappsreviewer and @viajantedoceu, so here’s where I land:

Where it kind of works

- Decent if you just want AI text to look a bit more “messy” and less like raw model output.

- OK for low‑stakes stuff: filler posts, test pages, PBNs, drafts you know you’ll heavily re-edit.

- Sometimes it does make the text feel a bit more “lived in” with minor quirks and imperfections.

Where it falls apart

- Detector evasion is unreliable. I also saw that classic pattern: one chunk barely flagged, next chunk from the same source slammed as high AI. If you’re under academic or corporate policies, that’s too much roulette.

- Tone consistency is rough. You get whiplash: corporate deck in one paragraph, Reddit comment in the next. You can fix it, but then… why are you paying for a “humanizer” that you have to re‑humanize yourself?

- Time cost. The amount of manual cleanup basically killed any time savings for me. If I have to rephrase, fix tone, fix typos and weird phrasing anyway, I’d rather start from a good base model output or my own draft.

- Terms are a red flag if you handle confidential or premium content: no guarantee, no refund, and your text can be used for training. For clients who care about IP or privacy, that’s an instant “nope.”

Where I slightly disagree with the others

- I don’t think it’s completely useless. For throwaway niche sites where you just want stuff that doesn’t scream “straight from ChatGPT,” it can be marginally helpful.

- If you’re already a strong editor and you just want something to roughen up AI text a bit, you might squeeze some value out at smaller scales. But that’s a pretty specific use case.

Alternatives / what I’d actually do

- If your main concern is AI detection, Clever AI Humanizer performed more consistently for me. Still not magic, still not “undetectable,” but better, especially when I checked with multiple detectors.

- For important projects, I’d honestly:

- Generate a solid draft with a good model.

- Rewrite transitions, intros, and conclusions in your own voice.

- Read it out loud and tweak until it sounds like you (or your brand).

- Optionally pass it through something like Clever AI Humanizer at the very end and then re‑check / re‑edit.

If your question is literally “Can I trust WriteHuman for important client work, school, or anything with real consequences?” my answer is no. Use it, at best, for low‑stakes content and testing, and even there I’d probably lean on Clever AI Humanizer plus manual editing instead.

If your name, grade, or client relationship depends on it, I’d skip WriteHuman for now.

Quick angle that builds on what @viajantedoceu, @ombrasilente and @mikeappsreviewer already saw:

- The core problem is not just detector scores, it is control. You cannot reliably predict:

- How “human” a given chunk will look

- Whether tone and voice will stay aligned with your brand or persona

- What subtle edits it will introduce that you might miss in a quick skim

So the risk is not only “GPTZero flags this.” It is also “this sounds unlike you or your company and slightly off in ways that erode trust.”

Where I slightly disagree with them: I don’t think the occasional good output makes the tool worth building a workflow around. Inconsistent tools are worse than consistently mediocre ones, because you never know when they will silently fail.

On the flip side, if you are experimenting with low value pages, PBN content, or drafts you are ready to rewrite anyway, then a humanizer can be handy as a “roughening” step.

In that space, Clever AI Humanizer is the more interesting option right now:

Pros:

- Typically cleaner, more coherent tone than what people reported from WriteHuman

- Often lowers AI detector scores enough for basic SEO hygiene

- Feels less stitched together, so editing is faster

Cons:

- Still not a magic “undetectable” button

- Can occasionally over-simplify phrasing if you feed it nuanced or technical text

- You still need to proofread and adjust voice if you care about brand consistency

If you are deciding where to invest effort, I would:

- Use a strong base model for the real writing

- Keep tools like Clever AI Humanizer as optional polish for low or medium risk pieces

- Avoid sending anything confidential or high stakes into any third party humanizer at all

For serious work, your own editing plus a solid draft generator will be more reliable than trying to “launder” text through WriteHuman.